Ether

AI Tutoring Platform

Turned an AI reflective assistant from a concept into something usable. Defined how it works, where it needs guardrails, and what belongs in the MVP.

Role: Lead Product Designer

Scope: Interaction model, AI guidance system, MVP definition

Team: PM, 2 Engineers

The Problem

Conversational AI Without Structure

People were into it.

Early testing showed users were having long, reflective conversations with AI.

The kind where you think, “okay… this is kind of working.”

But then you zoom out.

The outcomes were inconsistent.

Open-ended chat created a few predictable problems:

• People didn’t know how to get anything useful out of it

• Conversations drifted and never really resolved

• The system’s boundaries were fuzzy at best

So instead of feeling like a tool, it started to feel like something you try once, think is interesting… and then don’t come back to.

The real challenge was figuring out how to keep that sense of openness while giving the experience enough structure to actually lead somewhere.

Operating Constraints

• Early-stage AI product

• Undefined interaction paradigm

• Limited engineering capacity

• Sensitive user inputs

• Need to define MVP quickly

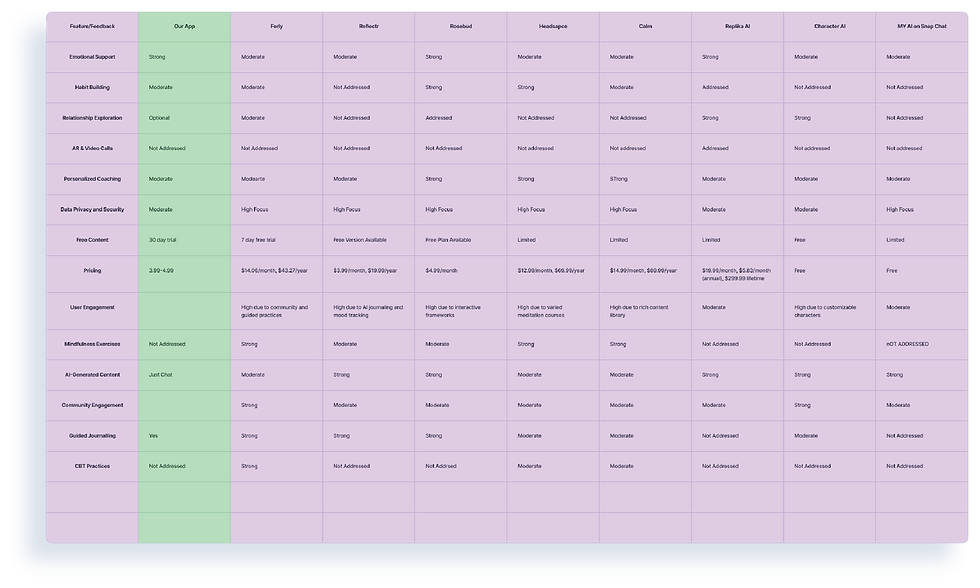

Research Insights That Helped

• Users want emotional validation but also practical direction.

• Blank journaling increases abandonment; guided prompts increase completion.

• Visible progress reinforces continued engagement.

These insights suggested that free-form chat alone would not sustain long-term usage.

Methods: Qualitative user interviews, diary study, quantitative survey

Participants: 150 (diverse Gen Z)

Exploration & Hypothesis Testing

Hypothesis: Structured conversational guidance would improve clarity and repeat usage over fully open-ended chat.

We tested:

-

Open chat with persona guides

-

Guided topic selection

-

Structured reflection loops

-

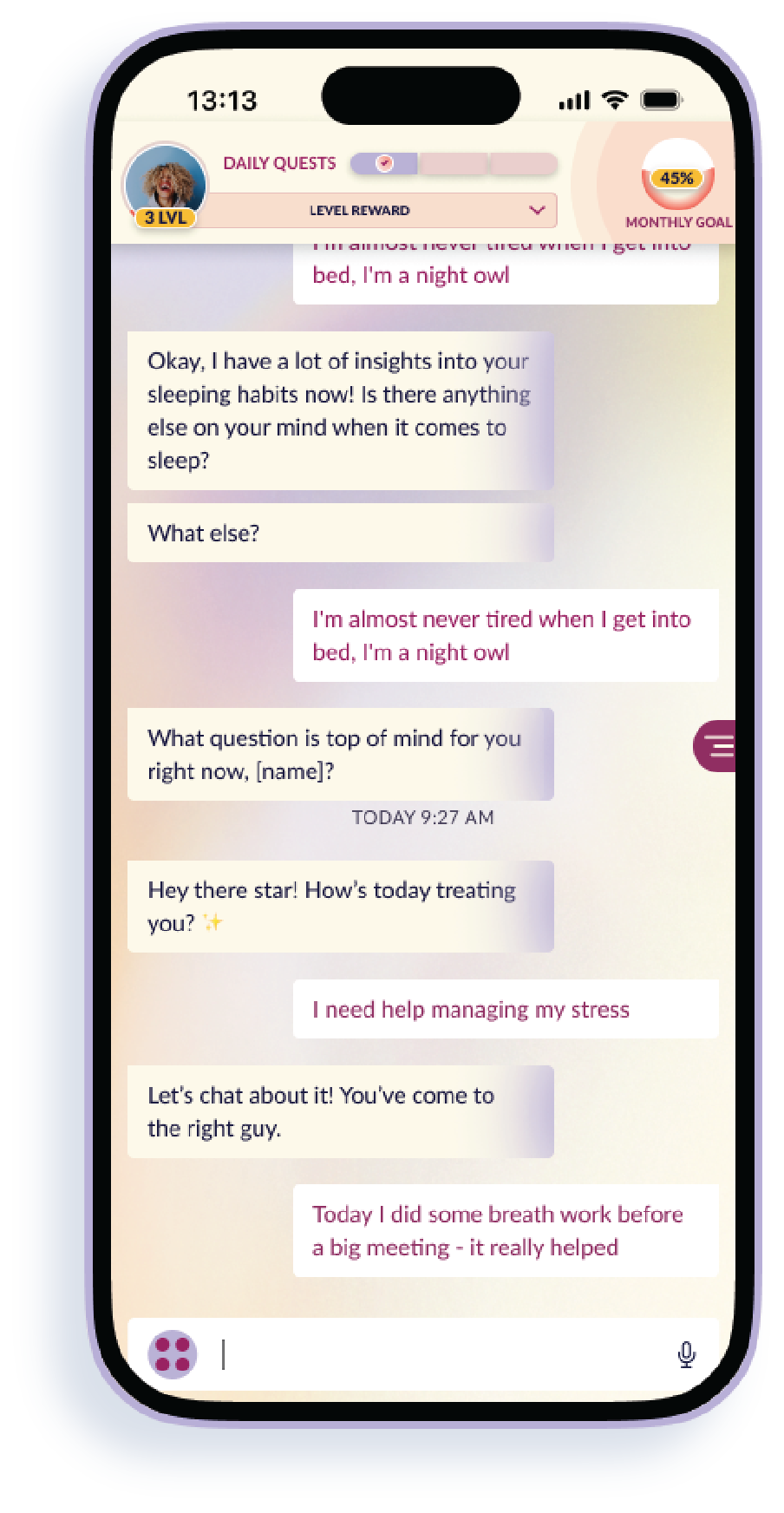

Micro-prompts and daily quests

Learning: Open chat increased message volume. Guided flows increased session completion and perceived usefulness.

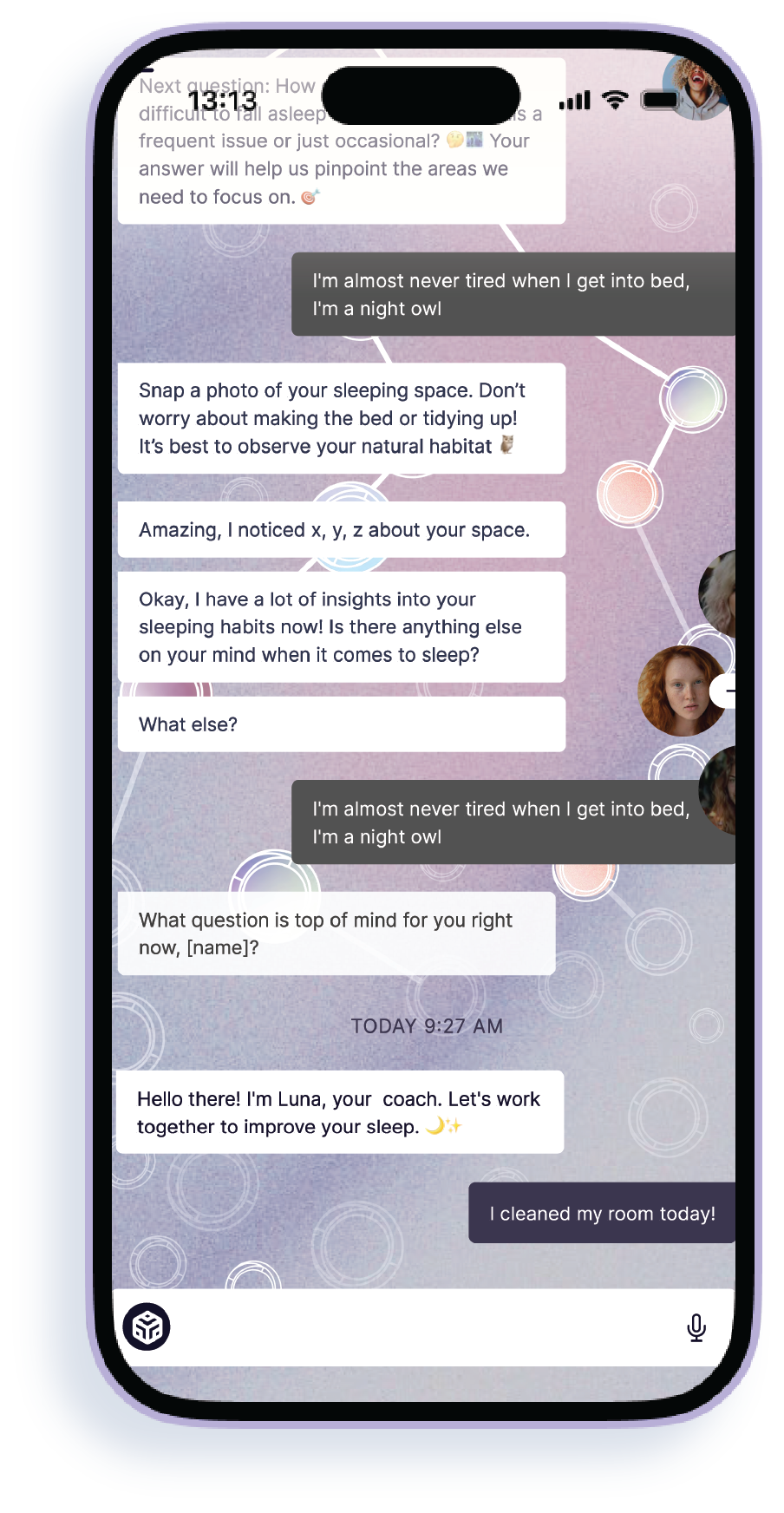

Defining the Interaction Model

We pulled back from fully open, persona-driven chat and gave the experience some structure:

• Topic-based entry points

• Structured conversational loops

• Optional freeform expansion

• Clear session completion

• Visible progress markers

Not to make it rigid. Just to make it work.

A little constraint went a long way.

Conversations became clearer, outcomes became more consistent, and users had a much better sense of their progress and more trust in the system.

Designing for Boundaries

To keep the AI from getting weird or overstepping, we added some guardrails:

• Avoided sounding overly authoritative or medical

• Defined clear refusal and redirection behavior

• Put limits on how far personalization could go

• Cut down on guide overload

• Made the system’s limits obvious in the UI

Because with AI, more isn’t always better.

If people don’t understand the boundaries, they stop trusting it.

MVP Definition & Trade-offs

Shipped:

• Guided conversational flows

• Basic progress tracking

• Prompt library

• Subscription framework

• Minimal-effort entry paths

Deferred:

• Deep memory persistence

• Advanced branching logic

• Social layers

• Expanded gamification

Given engineering constraints and trust considerations, we prioritized structural clarity over experiential depth.

Aligning on Interaction Philosophy

Early on, there was a bit of a tug-of-war:

• Let people explore freely

• Or guide them through something more structured

Both sound good. Together, they get messy.

Through a few working sessions, we made some calls:

• Cut down the number of guides

• Make progress visible

• Define what “done” actually means

Once that was clear, everything got easier to build.

Less ambiguity, fewer edge cases, and a much more focused experience.

Outcomes:

• Built an interaction architecture that could scale

• Cut down on conversational drift and got more sessions to actually finish

• Added guardrails so the AI stayed useful without overstepping

• Turned the MVP into something clear and buildable for engineering

Key Learnings:

A few things we learned (some faster than others):

• AI personality is a balancing act. Too strong and it overreaches. Too neutral and it’s useless.

• People don’t actually want a blank page. Structured prompts work better.

• If you don’t define guardrails early, personality just makes things messier.

• Clarity beats novelty. Every time.
